The Value of Service Mesh

The Road to Service Mesh

When the microservices architecture pattern started to evolve, the development community welcomed the ability to innovate faster with smaller services, each with a defined scope and functionality. The shift was not just a code change; it also reshaped team structures to fit the new architecture.

VMs no doubt helped this architecture take hold, but it really exploded once containers, and then Kubernetes, entered the mix. As a developer you no longer need to wait for new VMs to be created and configured before running your code. Kubernetes made things easier, but...

Unless you are a developer who wants to dig deeper, the Kubernetes networking and infrastructure layer is still a big hurdle. In an enterprise, even if you have the knowledge and skills, you are probably blocked from making such changes or not allowed to make them.

What is Service Mesh

Service Mesh is a step towards giving more control and visibility to development, operations and SRE teams. It is an abstraction layer that gives fine-grained control over the communication between the microservices in the mesh and, on top of that, provides many visibility features out of the box.

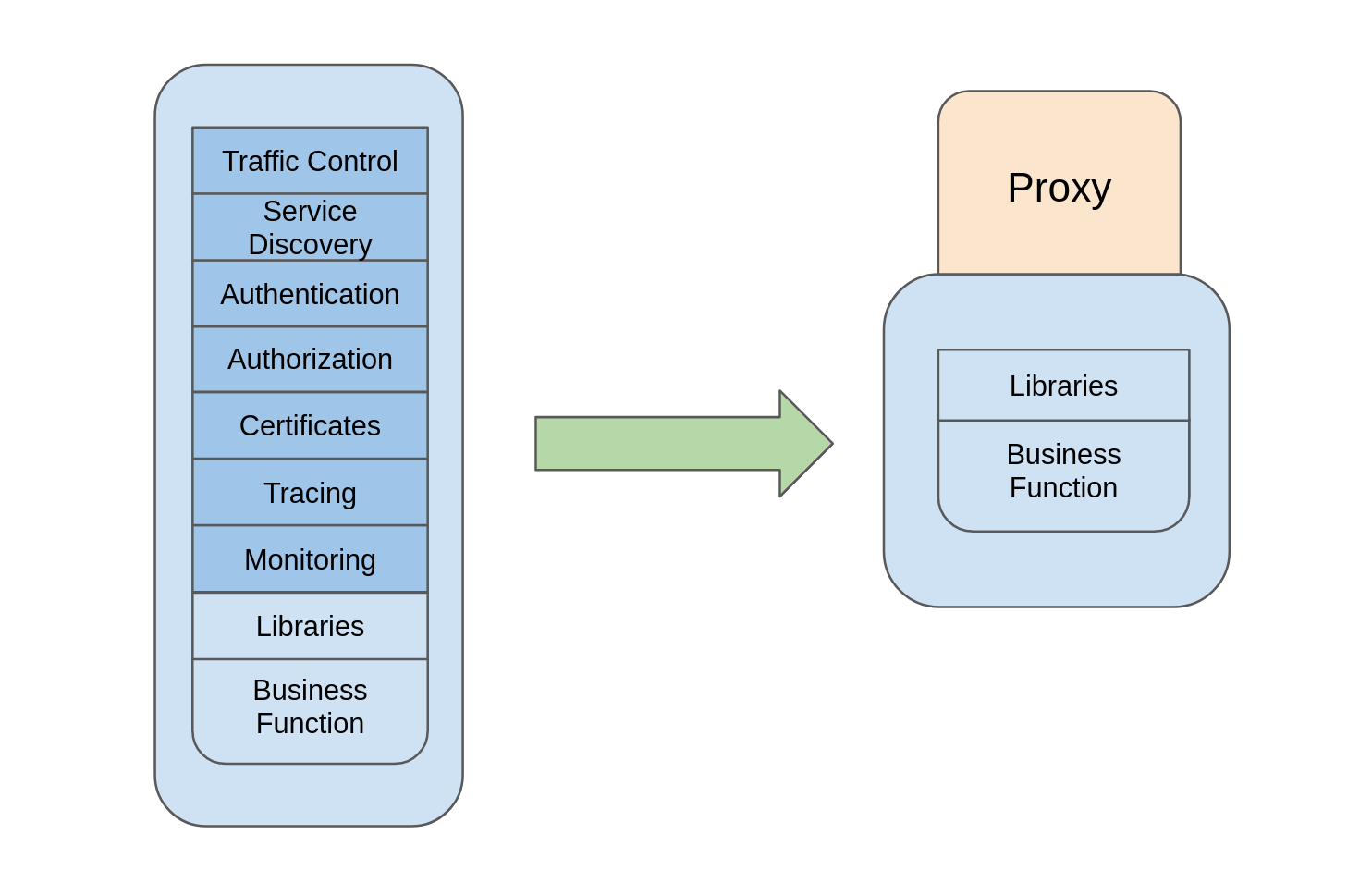

A typical microservice needs to implement operational functionality that is not its core function and adds no business value, such as:

- Secure the traffic with TLS (HTTPS)

- Handle authentication certificates

- Develop an authentication mechanism

- Apply authorization rules and Role-Based Access Control (RBAC)

- Implement a service discovery mechanism, such as Netflix OSS or Kubernetes DNS

- Integrate a distributed tracing solution that traces requests across microservices, so you can debug them and make sure the flow is as expected

- Collect metrics and provide observability

- And probably more

After all of the above, the development team still has not written a single line of code that handles the business logic.

On the operations side, what happens if one of your services goes down? Will you notice? What defense mechanism have you implemented to avoid traffic overload?

Architects and developers need to implement these operational scenarios in code, service by service. They are well-known patterns, but you still need to write code to handle scenarios such as:

- Failover Mechanisms

- Retry Mechanisms

- Timeouts

- Circuit Breakers

- etc.
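To make the cost concrete, here is a minimal Python sketch of the kind of retry, timeout and failover code each service would otherwise carry. The function `call_with_failover`, its parameters and the `request` callable are hypothetical; `request` is assumed to enforce the timeout it is given and to raise `TimeoutError` or `ConnectionError` on transient failures.

```python
import time
from typing import Callable, List, Sequence, Tuple, TypeVar

T = TypeVar("T")


class AllEndpointsFailed(Exception):
    """Raised when every retry against every endpoint has failed."""


def call_with_failover(
    endpoints: Sequence[str],
    request: Callable[[str, float], T],
    attempts_per_endpoint: int = 3,
    timeout_s: float = 0.5,
    backoff_s: float = 0.1,
) -> T:
    """Retry transient errors with exponential backoff, then fail over to the next endpoint."""
    errors: List[Tuple[str, int, Exception]] = []
    for endpoint in endpoints:  # failover: move on to the next replica
        for attempt in range(attempts_per_endpoint):  # retries against this replica
            try:
                # The per-call timeout is passed down; `request` is assumed to
                # enforce it and raise TimeoutError when it expires.
                return request(endpoint, timeout_s)
            except (TimeoutError, ConnectionError) as exc:
                errors.append((endpoint, attempt, exc))
                time.sleep(backoff_s * (2 ** attempt))  # exponential backoff
    raise AllEndpointsFailed(errors)
```

Every service that calls another would need something like this, for example `call_with_failover(["orders-1", "orders-2"], http_get)` with a hypothetical `http_get` client. In a mesh, the equivalent behavior is configured in the proxy instead of being written into each service.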

Service Mesh Benefits

Service Mesh can provide all of the above without writing additional application code. The result is cleaner, simpler and smaller code that focuses on business goals, better products and a better experience for users.

Keeping your microservices truly "micro" can also improve performance and reduce the time it takes to pull and load your container images.

From an operational perspective, traffic management is the core functionality of a Service Mesh. The mesh injects a proxy server (a sidecar) next to each service; the proxy intercepts all traffic to and from that service and acts on it according to the configuration you provide to the mesh.

Istio, for example, uses the Envoy proxy, a layer 7 proxy server that understands application protocols such as HTTP and can read their headers. Traffic interception, combined with the configuration you provide, lets the proxy handle security, authorization, retries and the other capabilities mentioned above outside your application code.
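The sketch below is a simplified, in-process model of that idea, not how Envoy is implemented: a wrapper plays the role of the sidecar, reads request headers, and enforces a declarative allow-list before the application handler runs. The header name `x-caller-identity` and the `SidecarConfig` policy are hypothetical; Istio, for instance, derives caller identity from mutual TLS certificates rather than from a plain header.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set, Tuple

Response = Tuple[int, str]
Handler = Callable[[Dict[str, str]], Response]  # request headers -> (status, body)


@dataclass
class SidecarConfig:
    # Hypothetical declarative policy: caller identities allowed to reach this service.
    allowed_callers: Set[str] = field(default_factory=set)


def sidecar(app: Handler, config: SidecarConfig) -> Handler:
    """Wrap the app the way a sidecar sits in front of it: every request passes here first."""

    def intercept(headers: Dict[str, str]) -> Response:
        # Layer 7 awareness: the proxy reads HTTP headers before the app sees the request.
        caller = headers.get("x-caller-identity", "")  # hypothetical header name
        if caller not in config.allowed_callers:
            return (403, "forbidden by mesh policy")
        return app(headers)

    return intercept


def orders_app(headers: Dict[str, str]) -> Response:
    # Business logic only: no authorization code lives in the service itself.
    return (200, "order list")
```

The design point is that `orders_app` contains no security code at all; changing who may call it means changing the `SidecarConfig` policy, not the service.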

Traffic control also lets you inject failures on purpose, and circuit breakers let the mesh stop sending requests to a service, or quickly reject excess requests, when that service is failing or receives an unexpected number of requests.
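A circuit breaker is easiest to understand as a small state machine. The following is a minimal Python sketch assuming a consecutive-failure policy: after `max_failures` errors in a row the circuit opens and calls fail fast, and after `reset_after_s` seconds a trial request is allowed through. Class and parameter names are hypothetical; a mesh applies equivalent logic in the proxy (Istio configures it through connection pool and outlier detection settings), and a limit on pending requests is another common trigger.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised instead of calling the service while the circuit is open."""


class CircuitBreaker:
    """Consecutive-failure circuit breaker: closed -> open -> half-open -> closed."""

    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                # Open: fail fast and give the unhealthy service room to recover.
                raise CircuitOpenError("circuit open")
            # Half-open: the cooldown has elapsed, so let a trial request through.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()  # trip, or re-trip after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # any success closes the circuit again
        return result
```

The clock is injectable so the cooldown can be tested without waiting; in production the default `time.monotonic` is used.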

Service Mesh offers add-ons that give a live graphical representation of what is happening within the mesh. Visualizing the traffic between microservices helps you detect undesired behavior in your application, which you can then mitigate with simple declarative configuration.
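Such views are typically built from metrics the proxies report for every request they handle. A toy Python version of that bookkeeping, keyed by (source, destination) service pair, might look like this; `MeshTelemetry` and `EdgeStats` are hypothetical names, and the wrapped call is assumed to return an HTTP status code.

```python
import time
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Edge = Tuple[str, str]  # (source service, destination service)


@dataclass
class EdgeStats:
    requests: int = 0
    errors: int = 0
    latencies_s: List[float] = field(default_factory=list)


class MeshTelemetry:
    """Toy stand-in for the per-request metrics a sidecar could report."""

    def __init__(self, clock: Callable[[], float] = time.perf_counter) -> None:
        self.clock = clock
        self.edges: Dict[Edge, EdgeStats] = defaultdict(EdgeStats)

    def record(self, source: str, dest: str, call: Callable[[], int]) -> int:
        """Time one call from `source` to `dest` and count it; returns its status code."""
        start = self.clock()
        status = call()
        stats = self.edges[(source, dest)]
        stats.requests += 1
        stats.latencies_s.append(self.clock() - start)
        if status >= 500:  # count server-side failures as errors
            stats.errors += 1
        return status

    def error_rate(self, source: str, dest: str) -> float:
        stats = self.edges.get((source, dest))  # .get avoids creating empty edges
        return stats.errors / stats.requests if stats and stats.requests else 0.0
```

Each key is an edge in the service graph, and a rising `error_rate` on one edge is the kind of undesired behavior the graphical view helps you spot.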

From an SRE perspective, chaos engineering, chaos testing and fault injection are practices that test how system components behave under adverse conditions, to verify service resiliency and latency limits. Service Mesh enables these practices through declarative configuration changes and lets you observe, live, how the different system components respond.
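Fault injection can be pictured as a wrapper in the request path that deliberately slows down or fails a configured share of requests. This Python sketch is loosely modeled on the delay and abort faults a mesh such as Istio can inject; `FaultConfig`, `with_faults` and their fields are hypothetical names, and the random source and sleep function are injectable so tests stay deterministic.

```python
import random
import time
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

Response = Tuple[int, str]


@dataclass
class FaultConfig:
    # Hypothetical knobs, loosely modeled on mesh delay/abort fault injection.
    delay_percent: float = 0.0   # share of requests to slow down
    delay_s: float = 0.0         # how long to slow them down
    abort_percent: float = 0.0   # share of requests to fail outright
    abort_status: int = 503      # status code returned for aborted requests


def with_faults(handler: Callable[[], Response], cfg: FaultConfig,
                rng: Optional[random.Random] = None,
                sleep: Callable[[float], None] = time.sleep) -> Callable[[], Response]:
    """Wrap a handler so a configured fraction of calls is delayed or aborted."""
    rng = rng or random.Random()

    def faulty() -> Response:
        if rng.random() * 100 < cfg.delay_percent:
            sleep(cfg.delay_s)  # simulate a slow dependency
        if rng.random() * 100 < cfg.abort_percent:
            return (cfg.abort_status, "fault injected")  # simulate a failing dependency
        return handler()

    return faulty
```

With `abort_percent=10`, roughly one request in ten returns 503, which lets you check that the callers' retries and circuit breakers behave as intended.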

How about a better way to deploy new features and test them with live traffic? Canary deployments run a new version of a service alongside the old one, and traffic control configuration forwards a portion of the live traffic to the new version. With layer 7 proxies you can split the traffic by percentage or by user segment, for example Android users vs. iPhone users.
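The routing decision a layer 7 proxy makes for a canary can be sketched in a few lines of Python. The rules here are hypothetical: requests whose User-Agent header mentions Android always go to the canary `v2`, and every other request goes to `v2` with probability `canary_percent`. In Istio the same intent is expressed declaratively as header matches and route weights in a VirtualService.

```python
import random
from typing import Dict, Optional


def pick_version(headers: Dict[str, str], canary_percent: float,
                 rng: Optional[random.Random] = None) -> str:
    """Choose 'v1' (stable) or 'v2' (canary) for one request."""
    # Segment rule (hypothetical): every Android user goes to the canary.
    # Header names are assumed to be lower-cased already.
    if "android" in headers.get("user-agent", "").lower():
        return "v2"
    # Weighted rule: about canary_percent % of the remaining requests go to v2.
    rng = rng or random.Random()
    return "v2" if rng.random() * 100 < canary_percent else "v1"
```

Rolling back amounts to setting `canary_percent` to 0 and removing the segment rule, so all traffic returns to `v1` without redeploying anything.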

You may have noticed live testing, with the ability to roll back if issues arise, while using Google or Facebook: a friend got a new feature that had not reached you yet. This type of testing fosters a better user experience and more innovative teams that are closer to their customers' needs and behaviors.

Business Value of Service Mesh

The business value that Service Mesh brings to teams and enterprises goes beyond the technological advantages mentioned above. Equipping your development and operations teams with better technology for their day-to-day work turns into benefits for your organization in terms of:

- Better development experience.

- Enhanced live visibility.

- Stable applications, tested in various scenarios and able to roll back in seconds.

- A richer culture of innovation within the team.

- More time focused on business value and user experience, and less time spent solving team problems.

- Faster time to market with small, fine-grained releases.

- Higher availability of your application, which leads to a better user experience.

Have you started your journey with Service Mesh yet?